Navigating the Unknown: Key Ethical Challenges and Biases in Artificial Intelligence

A Comprehensive Analysis of Algorithmic Fairness, Transparency, and Responsible Innovation

Published: March 2026 | Reading Time: 8 minutes

Executive Summary: As artificial intelligence systems increasingly influence critical societal decisions—from healthcare diagnostics to criminal justice sentencing—addressing embedded ethical challenges and algorithmic biases has become imperative. This analysis examines the landscape of AI ethics, categorizing major bias types, documenting real-world consequences, and presenting evidence-based mitigation strategies for responsible AI deployment.

I. Introduction: The Ethical Imperative

Artificial intelligence has transitioned from theoretical concept to omnipresent force, fundamentally reshaping decision-making processes across healthcare, finance, criminal justice, and employment sectors. While these technologies promise unprecedented efficiency and capability, they simultaneously introduce complex ethical challenges that demand rigorous examination.

Research indicates that AI systems can inherit and amplify biases present in training data, resulting in discriminatory outcomes that perpetuate historical inequalities [6]. The stakes are considerable: algorithmic bias risks injustice, loss of autonomy, and erosion of accountability, and these harms can outpace what existing regulatory frameworks are equipped to handle [8].

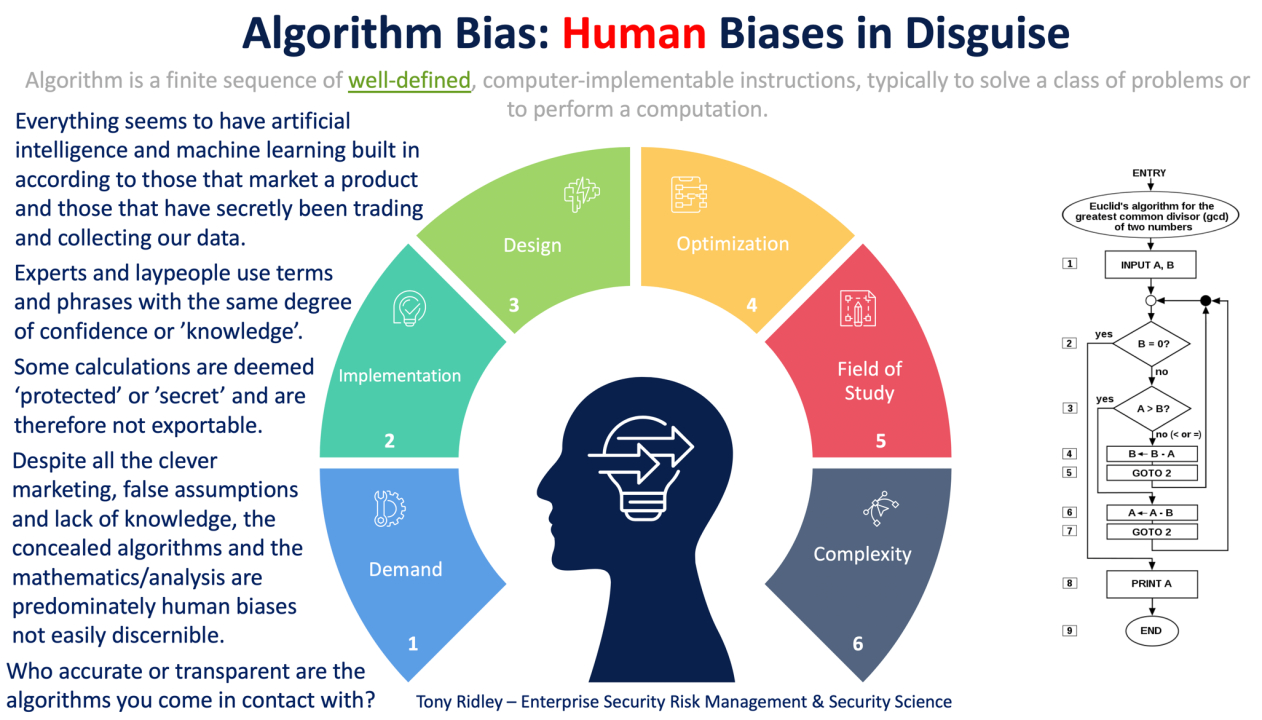

Figure 1: Overview of AI in Ethical Decision-Making Processes

II. Taxonomy of AI Bias

Understanding the mechanisms through which bias enters AI systems is fundamental to developing effective mitigation strategies. Researchers have identified three primary categories of algorithmic bias:

A. Input Bias

Input bias occurs when training data fails to represent diverse populations or contains embedded historical prejudices. When AI systems learn from data reflecting past discrimination, they systematically perpetuate these patterns. Facial recognition technologies, for instance, have shown markedly lower accuracy for women and for individuals with darker skin tones, raising serious ethical concerns in security and healthcare applications [4].
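
The kind of disparity described above can be surfaced by breaking a model's accuracy down by demographic group instead of reporting a single aggregate figure. The following is a minimal sketch in Python, not a reference implementation; the function name `accuracy_by_group` and the toy data are illustrative assumptions and do not come from any system discussed in this article.

```python
from collections import defaultdict

def accuracy_by_group(y_true, y_pred, groups):
    """Return {group: accuracy} for parallel lists of labels, predictions, and group tags."""
    correct = defaultdict(int)
    total = defaultdict(int)
    for truth, pred, group in zip(y_true, y_pred, groups):
        total[group] += 1
        correct[group] += int(truth == pred)
    return {g: correct[g] / total[g] for g in total}

# Toy, made-up data for illustration only (hypothetical groups "a" and "b").
y_true = [1, 0, 1, 1, 0, 1, 0, 1]
y_pred = [1, 0, 1, 1, 0, 0, 1, 1]
groups = ["a", "a", "a", "a", "b", "b", "b", "b"]

per_group = accuracy_by_group(y_true, y_pred, groups)
accuracy_gap = max(per_group.values()) - min(per_group.values())
print(per_group, accuracy_gap)
```

A large gap between groups does not by itself identify the cause, but it signals that the training data or the model warrants closer review.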

B. Systemic Bias

Systemic bias emerges from the design of AI algorithms themselves. The "black box" nature of many machine learning models makes their decision-making opaque, which complicates efforts to identify discriminatory patterns. Researchers describe the result as an "epistemic problem": latent biases may go undetected until a system has been in deployment for a long time [8].

C. Application Bias

Application bias arises when AI systems are deployed in contexts that differ from their training environments. A model developed for one demographic may produce harmful outcomes when applied to a different population, particularly in healthcare, where underrepresentation of minority groups in the training data can significantly skew predictive accuracy [5].
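
One rough way to check for this mismatch before deployment is to compare the demographic composition of the training data with that of the target population. The sketch below uses total variation distance between group shares as the comparison; the function names, the toy group labels, and any threshold applied to the result are assumptions for illustration, and other distance measures would serve equally well.

```python
from collections import Counter

def group_shares(groups):
    """Return {group: fraction of records} for a list of group tags."""
    counts = Counter(groups)
    n = len(groups)
    return {g: c / n for g, c in counts.items()}

def total_variation_distance(train_groups, deploy_groups):
    """Half the sum of absolute differences in group shares (0 = identical, 1 = disjoint)."""
    p = group_shares(train_groups)
    q = group_shares(deploy_groups)
    keys = set(p) | set(q)
    return 0.5 * sum(abs(p.get(k, 0.0) - q.get(k, 0.0)) for k in keys)

# Hypothetical populations: group "y" is 10% of training data but 50% of deployment data.
train = ["x"] * 90 + ["y"] * 10
deploy = ["x"] * 50 + ["y"] * 50

shift = total_variation_distance(train, deploy)
print(round(shift, 3))
```

A value near zero suggests the two populations have similar composition. What counts as too large a shift is a judgment call that depends on the application.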

Figure 2: Classification of Algorithmic Bias Types and Manifestations

III. Case Studies: Documented Consequences of Algorithmic Bias

Theoretical concerns about AI ethics translate into tangible harms for real populations. The table below summarizes widely reported cases of algorithmic bias across sectors:

| Sector | System/Case | Documented Impact | Source |
|---|---|---|---|
| Criminal Justice | COMPAS Recidivism Algorithm | Black defendants classified as high-risk for violent recidivism at nearly twice the rate of white defendants with similar profiles [9] | ProPublica Investigation |
| Healthcare | Hospital Care Allocation Algorithm | System used projected healthcare cost as a proxy for illness severity; Black patients made up only 17.7% of those flagged for additional care, versus an estimated 46.5% had the score reflected health need [2] | Research Study (2019) |
| Employment | Amazon AI Recruiting Tool | Resumes containing the word "women's" (e.g., "women's chess club") systematically downgraded due to training on male-dominated historical data [9] | Internal Audit |
| Financial Services | Mortgage Pricing Algorithms | Minority borrowers charged higher interest rates than white borrowers with identical credit profiles and risk assessments [2] | UC Berkeley Study |
| Education | UK Pandemic Grading Algorithm | Grades systematically lowered for state school students while scores at wealthier private institutions were inflated [2] | Government Review |
| Child Welfare | Florida Risk Assessment Tool | Minority mothers flagged at disproportionately high rates for potential neglect based on neighborhood poverty proxies [2] | State Investigation |

Critical Insight: These cases show that algorithmic bias is a systemic issue with profound societal implications. Its effects extend beyond individual harm, because automated systems can amplify discrimination at unprecedented speed and scale [8].
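
Disparities of the kind reported in risk-scoring cases are often quantified with the false positive rate per group: among people who did not have the predicted outcome, the share who were nevertheless flagged. The sketch below is a hedged illustration on made-up records; the function name, the record layout, and the groups "a" and "b" are assumptions, and the numbers are not drawn from any of the cases in the table.

```python
from collections import defaultdict

def false_positive_rate_by_group(records):
    """records: iterable of (group, actual_positive, predicted_positive) tuples.

    Returns {group: FP / (FP + TN)}, the share of actual negatives in each
    group that were wrongly flagged as positive. Groups with no actual
    negatives are omitted.
    """
    false_pos = defaultdict(int)
    negatives = defaultdict(int)
    for group, actual, predicted in records:
        if not actual:
            negatives[group] += 1
            false_pos[group] += int(bool(predicted))
    return {g: false_pos[g] / negatives[g] for g in negatives}

# Toy data: (group, actually had the outcome, flagged as high-risk).
records = [
    ("a", 0, 1), ("a", 0, 1), ("a", 0, 0), ("a", 0, 0), ("a", 1, 1),
    ("b", 0, 1), ("b", 0, 0), ("b", 0, 0), ("b", 0, 0), ("b", 1, 1),
]

fpr = false_positive_rate_by_group(records)
fpr_ratio = fpr["a"] / fpr["b"]
print(fpr, fpr_ratio)
```

In this toy example, group "a" is wrongly flagged at twice the rate of group "b" even though both groups have the same share of actual positives.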

IV. Core Ethical Challenges in AI Development

Beyond bias, artificial intelligence presents several interconnected ethical challenges requiring comprehensive governance frameworks:

Transparency & Explainability

The "black box" nature of deep learning models undermines accountability. This opacity challenges autonomy principles, as individuals cannot provide informed consent when decision-making processes remain obscured [8].

Privacy & Data Security

AI systems require massive datasets that often contain sensitive personal information. Unauthorized access or misuse can seriously violate individuals' privacy, particularly in healthcare, where compromised data causes both financial and personal harm [7].

Accountability & Liability

Determining responsibility when AI systems cause harm presents complex legal challenges. The "responsibility gap" can leave victims without recourse, eroding public trust in AI technologies [6].

Autonomy & Human Control

As AI systems achieve greater autonomy, concerns regarding loss of human control intensify—particularly in applications like autonomous weapons and critical infrastructure management [6].

Figure 3: Primary Ethical Challenges of AI in Human Resources

V. Evidence-Based Mitigation Strategies

Addressing AI ethics requires a multi-layered approach integrating technical solutions, organizational practices, and regulatory compliance. The following framework represents current best practices:

Technical Interventions

- Algorithmic Debiasing: Implement fairness-aware methods such as adversarial debiasing to train models whose predictions do not depend on sensitive attributes [4]

- Explainable AI (XAI): Deploy interpretability techniques to clarify decision-making processes and enable meaningful oversight [4]

- Data Diversification: Ensure training datasets represent broad spectrums of gender, ethnicity, and socio-economic backgrounds to prevent homogeneous data bias [7]
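
Adversarial debiasing requires a full model-training loop, so the sketch below illustrates a different and simpler fairness-aware technique: reweighing, a pre-processing method described by Kamiran and Calders. Each training example receives the weight P(group) × P(label) / P(group, label), which makes group and label statistically independent in the weighted data. The function names and toy data are assumptions for illustration only.

```python
from collections import Counter

def reweighing_weights(groups, labels):
    """Return {(group, label): weight} with weight = P(g) * P(y) / P(g, y)."""
    n = len(labels)
    group_counts = Counter(groups)
    label_counts = Counter(labels)
    joint_counts = Counter(zip(groups, labels))
    return {
        (g, y): (group_counts[g] * label_counts[y]) / (n * joint_counts[(g, y)])
        for (g, y) in joint_counts
    }

def weighted_positive_rate(groups, labels, weights, group):
    """Weighted share of positive labels within one group."""
    num = sum(weights[(g, y)] for g, y in zip(groups, labels) if g == group and y == 1)
    den = sum(weights[(g, y)] for g, y in zip(groups, labels) if g == group)
    return num / den

# Toy data: group "a" has 3 of 4 positive labels, group "b" has 1 of 4.
groups = ["a", "a", "a", "a", "b", "b", "b", "b"]
labels = [1, 1, 1, 0, 1, 0, 0, 0]

weights = reweighing_weights(groups, labels)
rate_a = weighted_positive_rate(groups, labels, weights, "a")
rate_b = weighted_positive_rate(groups, labels, weights, "b")
print(weights, rate_a, rate_b)
```

After reweighing, both groups have the same weighted positive rate, so a learner that honors sample weights no longer sees group membership as predictive of the label in the training data. This addresses only one source of bias and is not a substitute for the other interventions listed above.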

Governance & Organizational Practices

- Ethical Auditing: Conduct regular comprehensive audits to ensure compliance with established ethical principles and identify emerging biases [4]

- Stakeholder Engagement: Involve technologists, ethicists, legal experts, and affected communities throughout development and deployment phases [4]

- Cross-functional Review: Establish diverse ethics review boards to evaluate AI systems prior to production deployment
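
As one concrete check an audit might include, the sketch below computes selection rates per group and the ratio between the lowest and highest rate. The 0.8 cutoff follows the "four-fifths rule" heuristic from US employment-selection guidelines; treating it as the audit threshold here is an assumption, as are the function names and the toy data.

```python
from collections import defaultdict

FOUR_FIFTHS_THRESHOLD = 0.8  # heuristic cutoff; an assumption, not a legal test

def selection_rates(decisions, groups):
    """Return {group: share of favorable decisions} for parallel lists."""
    selected = defaultdict(int)
    total = defaultdict(int)
    for decision, group in zip(decisions, groups):
        total[group] += 1
        selected[group] += int(bool(decision))
    return {g: selected[g] / total[g] for g in total}

def disparate_impact_ratio(rates):
    """Lowest selection rate divided by the highest (1.0 means parity)."""
    highest = max(rates.values())
    return min(rates.values()) / highest if highest > 0 else float("nan")

# Toy data: 5 of 10 applicants selected in group_1, 3 of 10 in group_2.
decisions = [1] * 5 + [0] * 5 + [1] * 3 + [0] * 7
groups = ["group_1"] * 10 + ["group_2"] * 10

rates = selection_rates(decisions, groups)
ratio = disparate_impact_ratio(rates)
needs_review = ratio < FOUR_FIFTHS_THRESHOLD
print(rates, ratio, needs_review)
```

A ratio below the cutoff is a prompt for human review, not proof of discrimination; the audit still has to examine why the rates differ.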

Regulatory Compliance

Organizations should adopt established frameworks such as the EU Ethics Guidelines for Trustworthy AI and ensure compliance with emerging regulations including the EU AI Act, which establishes legal standards for fairness, transparency, and accountability.

VI. Future Directions: Balancing Innovation and Ethics

The ethical challenges of artificial intelligence cannot be fully eliminated through technical solutions alone. Research suggests we must acknowledge residual ethical issues while working to maximize benefits and minimize harms [8]. This requires sustained collaboration between scientists, ethicists, policymakers, and civil society.

Strategic Priorities for Responsible AI

- Develop standardized benchmarks for measuring fairness and bias across diverse AI applications

- Create interdisciplinary educational programs training AI practitioners in ethical reasoning and social impact assessment

- Establish transparent incident reporting mechanisms for AI failures and near-misses

- Foster inclusive public engagement to ensure AI development aligns with societal values and human rights

- Implement continuous monitoring systems to detect bias drift in production environments
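
The last priority, monitoring for bias drift, can be sketched as a rolling window over recent production decisions that tracks the gap in favorable-outcome rates between groups. The class below is a minimal illustration under stated assumptions: the name `BiasDriftMonitor`, the window size, and the alert threshold are all hypothetical, and a real deployment would need persistence, alerting, and statistical care around small samples.

```python
from collections import deque

class BiasDriftMonitor:
    """Track the gap in positive-outcome rates between groups over recent decisions."""

    def __init__(self, window_size=100, max_gap=0.1):
        self.window = deque(maxlen=window_size)  # oldest decisions fall out automatically
        self.max_gap = max_gap

    def record(self, group, positive):
        self.window.append((group, bool(positive)))

    def current_gap(self):
        totals, positives = {}, {}
        for group, positive in self.window:
            totals[group] = totals.get(group, 0) + 1
            positives[group] = positives.get(group, 0) + int(positive)
        rates = [positives[g] / totals[g] for g in totals]
        # With fewer than two groups in the window there is nothing to compare.
        return max(rates) - min(rates) if len(rates) >= 2 else 0.0

    def drifted(self):
        return self.current_gap() > self.max_gap

monitor = BiasDriftMonitor(window_size=8, max_gap=0.2)

# Phase 1 (toy data): both groups receive favorable outcomes at the same rate.
for _ in range(2):
    for group, outcome in [("a", True), ("b", True), ("a", False), ("b", False)]:
        monitor.record(group, outcome)
drift_before = monitor.drifted()

# Phase 2 (toy data): outcomes diverge sharply between the groups.
for _ in range(4):
    monitor.record("a", True)
    monitor.record("b", False)
drift_after = monitor.drifted()

print(drift_before, drift_after)
```

Because the window holds only the most recent decisions, the monitor reflects current behavior and can flag a disparity that was absent when the model was first validated.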

VII. Conclusion

Navigating the ethical challenges of artificial intelligence demands vigilance, interdisciplinary collaboration, and unwavering commitment to human dignity. From algorithmic bias that perpetuates historical discrimination to transparency deficits that undermine accountability, the risks are substantiated and immediate. However, through implementation of robust mitigation strategies, adoption of comprehensive ethical frameworks, and maintenance of transparent dialogue regarding limitations, we can direct AI development toward outcomes that benefit humanity equitably.

The journey toward ethical AI remains ongoing, and perfection may prove elusive. Yet as research demonstrates, transparency regarding unavoidable biases—combined with rigorous efforts to eliminate addressable inequities—offers the most viable path forward [8]. As we stand at this technological inflection point, our ethical choices will fundamentally define the future of human-machine collaboration.

References & Further Reading

[2] Obermeyer, Z., et al. (2019). Dissecting racial bias in an algorithm used to manage the health of populations. Science.

[4] Analytics Vidhya. (2023). Understanding Algorithmic Bias: Types, Causes and Case Studies.

[5] Nature Medicine. (2024). Ethical considerations of AI in biomedical research and healthcare.

[6] Springer. (2024). AI Ethics: Navigating the Intersection of Technology and Morality.

[7] Blockchain Council. (2025). AI and the Ethics of Decision-Making in Law and Policy.

[8] Springer. (2024). Ethical Issues of AI: Challenges and Recommendations.

[9] ProPublica. (2016). Machine Bias: Risk Assessments in Criminal Sentencing.

[11] The Guardian. (2025). AI 'will both reduce and increase' unfairness in society.

Disclaimer: This article provides general information regarding AI ethics and should not be construed as legal or professional advice. Organizations implementing AI systems should consult with qualified ethics professionals and legal counsel to address specific compliance requirements.

© 2026 AI Ethics Review. All rights reserved.
